You’re seeing this when connecting via SSH:

@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@

@ WARNING: REMOTE HOST IDENTIFICATION HAS CHANGED! @

@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@

IT IS POSSIBLE THAT SOMEONE IS DOING SOMETHING NASTY!

Someone could be eavesdropping on you right now (man-in-the-middle attack)!

It is also possible that a host key has just been changed.

The fingerprint for the ED25519 key sent by the remote host is

SHA256:xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx.

Please contact your system administrator.

Add correct host key in /home/user/.ssh/known_hosts to get rid of this message.

Offending key in /home/user/.ssh/known_hosts:47

Host key verification failed.

SSH is refusing to connect because the server’s host key doesn’t match what’s stored in your known_hosts file. This is a security feature, but it also triggers after legitimate server changes.
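Before removing anything, it can help to look at the entry SSH is complaining about. The following is a minimal sketch, not part of OpenSSH: `show_offending_entry` is a hypothetical helper that reads SSH's error output on stdin, pulls the file and line number out of the "Offending key in ..." message shown above, and prints that line. It assumes the message format shown above (some OpenSSH versions also include the key type, as in "Offending ED25519 key in ...").

```shell
# show_offending_entry: read ssh's error output on stdin and print the
# known_hosts line that the "Offending ... key in FILE:LINE" message points to.
# Hypothetical helper for illustration; not an OpenSSH command.
show_offending_entry() {
    # Extract "FILE:LINE" from the first matching message line
    ref=$(sed -n 's/^Offending .*key in \(.*\):\([0-9][0-9]*\)[[:space:]]*$/\1:\2/p' | head -n 1)
    [ -n "$ref" ] || { echo "no offending-key line found" >&2; return 1; }
    file=${ref%:*}     # everything before the last colon
    line=${ref##*:}    # everything after the last colon
    # Print only that line of the known_hosts file
    sed -n "${line}p" "$file"
}
```

One way to use it is to feed it the failed connection's output, for example `ssh user@hostname.com 2>&1 | show_offending_entry`. With hashed entries the printed line will not show the hostname, but it still shows the stored key type and key.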

Before You Fix This: Security Check

Stop and think. This warning exists to protect you from man-in-the-middle attacks. Before removing the old key, ask yourself:

- Was this server recently rebuilt, reimaged, or migrated?

- Did the server’s OS get reinstalled?

- Did the IP address change to point to a different machine?

- Did someone rotate the SSH host keys?

If the answer to ALL of these is “no”, investigate further before proceeding. Someone may be intercepting your connection.

If you know the server changed legitimately, continue with the fix below.

Fastest Fix: Remove the Old Key and Reconnect

# Remove the old host key for a specific hostname

ssh-keygen -R hostname.com

# Or remove by IP address

ssh-keygen -R 10.0.1.50

# Or remove both (if you connect by both name and IP)

ssh-keygen -R hostname.com

ssh-keygen -R 10.0.1.50

# Now reconnect — SSH will ask you to accept the new key

ssh user@hostname.com

When you reconnect, SSH will show:

The authenticity of host 'hostname.com (10.0.1.50)' can't be established.

ED25519 key fingerprint is SHA256:xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx.

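Are you sure you want to continue connecting (yes/no/[fingerprint])?

Verify the fingerprint before typing “yes” (see Verify the New Host Key below). On OpenSSH 8.0 and later you can also paste the expected fingerprint at this prompt instead of typing “yes”; SSH then connects only if it matches.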

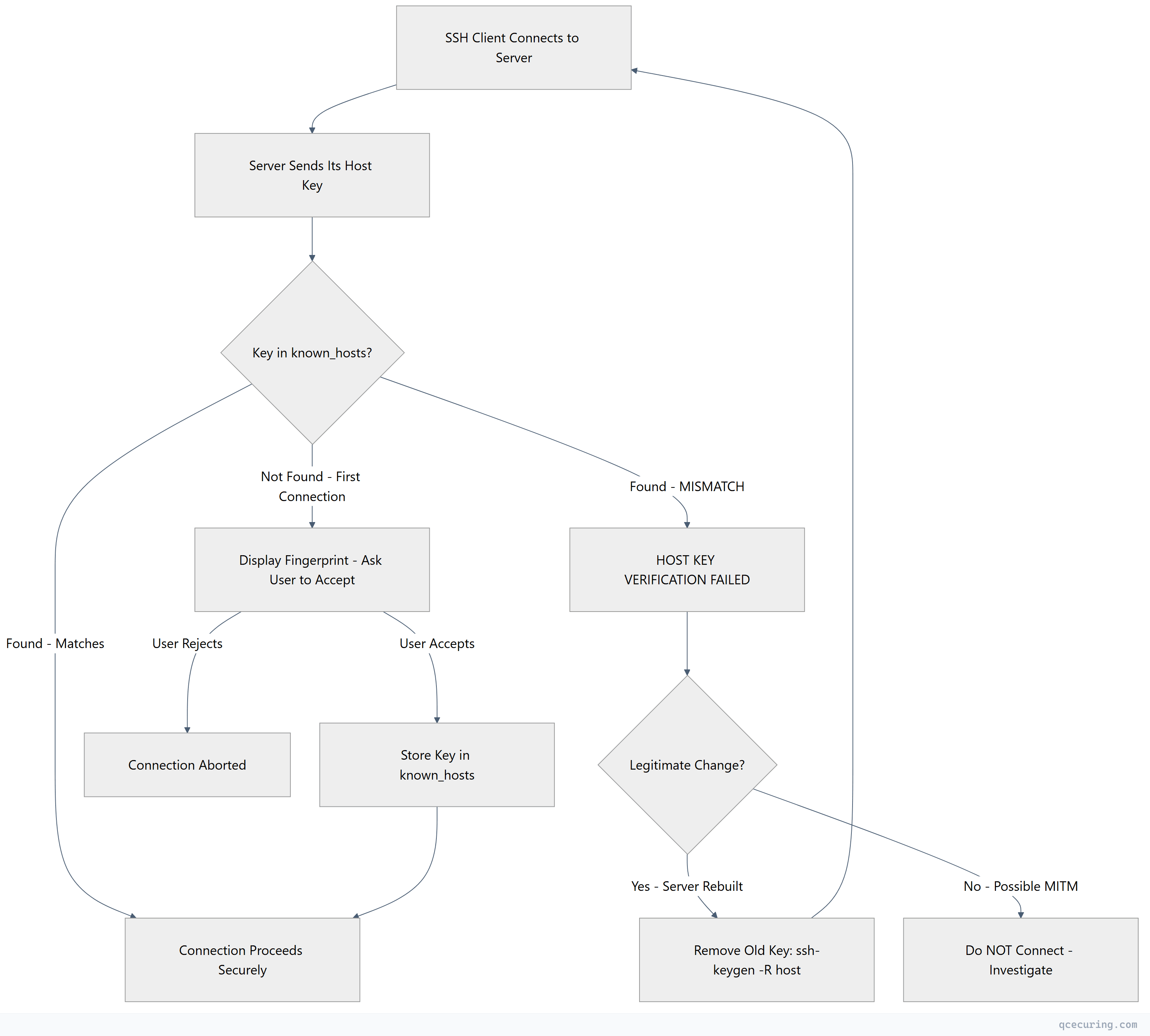

Host Key Verification Flow

Verify the New Host Key

Don’t blindly accept new host keys. Verify the fingerprint through an out-of-band channel:

Method 1 — check from the server console (if you have access):

# On the server, display all host key fingerprints

ssh-keygen -lf /etc/ssh/ssh_host_ed25519_key.pub

ssh-keygen -lf /etc/ssh/ssh_host_rsa_key.pub

ssh-keygen -lf /etc/ssh/ssh_host_ecdsa_key.pub

# Compare the output with what SSH showed you on the client

Method 2 - ask your team/provider:

If someone else rebuilt the server, ask them to run the command above and send you the fingerprint. Compare it with what SSH is showing.
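Comparing two long fingerprints by eye is error-prone. As a minimal sketch, the hypothetical helpers below compare the fingerprint you were sent with the one SSH displayed. They assume both are in the `SHA256:...` form shown above, and they strip the trailing period that the SSH messages print after the fingerprint.

```shell
# normalize_fp: remove whitespace and the trailing period that the
# SSH warning and prompt print after the fingerprint.
normalize_fp() {
    printf '%s' "$1" | tr -d '[:space:]' | sed 's/\.$//'
}

# same_fingerprint: succeed (exit status 0) only if both fingerprints are
# non-empty and identical after normalization. The comparison is
# case-sensitive because SHA256 fingerprints are base64-encoded.
same_fingerprint() {
    a=$(normalize_fp "$1")
    b=$(normalize_fp "$2")
    [ -n "$a" ] && [ "$a" = "$b" ]
}
```

For example, `same_fingerprint "$from_admin" "$from_ssh" && echo match` prints `match` only when the two values agree; `from_admin` and `from_ssh` are placeholder variable names.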

Method 3 — cloud provider console:

For AWS EC2, check the instance’s system log:

# Get the host key fingerprint from EC2 console output

aws ec2 get-console-output --instance-id i-1234567890abcdef0 --output text | grep -A1 "fingerprint"

Method 4 - DNS SSHFP records (if configured):

# Check if SSHFP records exist for the host

dig SSHFP hostname.com

# Connect with SSHFP verification

ssh -o VerifyHostKeyDNS=yes user@hostname.com

Remove the Old Key: All Methods

Method 1 — ssh-keygen -R (recommended):

# By hostname

ssh-keygen -R hostname.com

# By IP

ssh-keygen -R 10.0.1.50

# By hostname:port (non-standard port)

ssh-keygen -R "[hostname.com]:2222"

# By IP:port

ssh-keygen -R "[10.0.1.50]:2222"

Method 2 - edit known_hosts directly:

The error message tells you the exact line number:

Offending key in /home/user/.ssh/known_hosts:47

# Remove line 47

sed -i '47d' ~/.ssh/known_hosts

# Or on macOS

sed -i '' '47d' ~/.ssh/known_hosts

Method 3 - remove all keys for a host (hashed known_hosts):

If your known_hosts uses hashed entries (you see |1| at the start of lines), ssh-keygen -R is the only reliable method:

ssh-keygen -R hostname.com

Method 4 - nuclear option (clear entire known_hosts):

# Only do this if you understand the security implications

# (the leading ':' keeps this working in zsh, where a bare '>' waits for input)

: > ~/.ssh/known_hosts

# Or remove the file entirely

rm ~/.ssh/known_hosts

This forces re-verification of every server you connect to. Only appropriate for development machines, never for production jump hosts.
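Method 2 above needs a different `sed -i` form on Linux and macOS and leaves no backup. As a minimal sketch of a portable alternative, the hypothetical helper below copies the file first and then rewrites it without the given line, so the same command works with both GNU and BSD sed. The function name and the `.bak` suffix are choices made for this example.

```shell
# remove_known_hosts_line FILE LINE
# Delete one line from FILE, keeping the previous contents in FILE.bak.
# Hypothetical helper for illustration; ssh-keygen -R remains the
# recommended way to remove entries.
remove_known_hosts_line() {
    file=$1
    line=$2
    # Accept only positive integers as the line number
    case $line in
        ''|*[!0-9]*) echo "invalid line number: $line" >&2; return 1 ;;
    esac
    [ "$line" -gt 0 ] || { echo "invalid line number: $line" >&2; return 1; }
    [ -f "$file" ] || { echo "no such file: $file" >&2; return 1; }
    # Keep a copy first, then rewrite the original from that copy
    cp -p "$file" "$file.bak" || return 1
    sed "${line}d" "$file.bak" > "$file"
}
```

With the error message shown earlier, the call would be `remove_known_hosts_line ~/.ssh/known_hosts 47`. If the result looks wrong, the previous file is still available as `~/.ssh/known_hosts.bak`.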

Common Scenarios

Server Was Rebuilt or Reimaged

This is the most common cause. When a server is rebuilt, it generates new SSH host keys.

# Remove old key and reconnect

ssh-keygen -R server.example.com

ssh user@server.example.com

# Verify the new fingerprint, then accept

To preserve host keys across rebuilds (so clients don’t see this error):

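# Before rebuilding, back up host keys, then copy the archive off the server

# (anything left in /tmp is lost when the machine is rebuilt)

sudo tar czf /tmp/ssh_host_keys.tar.gz /etc/ssh/ssh_host_*

# After rebuilding, copy the archive back and restore the keys

sudo tar xzf ssh_host_keys.tar.gz -C /

# The service is named ssh instead of sshd on Debian and Ubuntu

sudo systemctl restart sshd

IP Address Changed to a Different Server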

If DNS was updated to point to a different machine, the host key will be different.

# Check what IP the hostname resolves to now

dig +short hostname.com

# Remove the old entry

ssh-keygen -R hostname.com

# Reconnect and verify the new key belongs to the correct server

ssh user@hostname.com

Using Elastic IPs or Floating IPs

Cloud environments reuse IP addresses. A new instance may get an IP that was previously used by a different server.

# Remove by IP

ssh-keygen -R 203.0.113.50

# Reconnect

ssh user@203.0.113.50

Load Balancer or Bastion Host Rotation

If you connect through a load balancer that routes to different backend servers, each backend has different host keys.

Fix — use the same host keys on all backends:

# Generate one set of host keys

ssh-keygen -t ed25519 -f ssh_host_ed25519_key -N ""

# Deploy to all backend servers (assumes you log in as root, since /etc/ssh is root-owned)

for server in backend1 backend2 backend3; do

    scp ssh_host_ed25519_key* "$server":/etc/ssh/

    ssh "$server" "chmod 600 /etc/ssh/ssh_host_ed25519_key && systemctl restart sshd"

done

StrictHostKeyChecking Options

SSH’s StrictHostKeyChecking setting controls how host key verification behaves:

| Setting | Behavior |
|---|---|
| yes | Refuse to connect if the key doesn’t match or is unknown. Most secure. |
| accept-new | Auto-accept keys for new hosts, reject changed keys. Good balance. |
| no | Accept any key without verification. Insecure; avoid in production. |
| ask (default) | Prompt the user for new hosts, reject changed keys. |

Configure in ~/.ssh/config:

# For trusted internal networks (auto-accept new, reject changes)

Host 10.0.*

    StrictHostKeyChecking accept-new

# For development VMs that change frequently

Host dev-*.local

    StrictHostKeyChecking no

    UserKnownHostsFile /dev/null

    LogLevel ERROR

# For production (strict, never auto-accept)

Host prod-*

    StrictHostKeyChecking yes

Security warning: Setting StrictHostKeyChecking no disables MITM protection entirely. Only use this for ephemeral development environments where you control the network. Never use it for production servers, jump hosts, or connections over untrusted networks.
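Because a stray `StrictHostKeyChecking no` is easy to forget, it can be worth checking which Host blocks in a config file disable verification. The following is a rough sketch under stated assumptions: `list_unchecked_hosts` is a hypothetical helper, it only understands plain `Host` blocks (no `Match` blocks, no `Include` files, no quoted values), and it treats `off` as a synonym for `no`.

```shell
# list_unchecked_hosts CONFIG
# Print the Host patterns of every block in CONFIG that sets
# StrictHostKeyChecking to no (or off).
# Hypothetical helper for illustration; it does not follow Include
# directives or evaluate Match blocks.
list_unchecked_hosts() {
    awk -F '[ \t=]+' '
        # Drop leading indentation so the keyword is always the first field
        { sub(/^[ \t]+/, "") }
        # Remember the patterns of the Host block we are currently in
        tolower($1) == "host" { $1 = ""; sub(/^ +/, ""); current = $0; next }
        # Report blocks that turn checking off
        tolower($1) == "stricthostkeychecking" && tolower($2) ~ /^(no|off)$/ {
            print (current == "" ? "(before any Host block)" : current)
        }
    ' "$1"
}
```

Run against the example configuration above with `list_unchecked_hosts ~/.ssh/config`, it would list only the `dev-*.local` block.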

Managing known_hosts at Scale

HashKnownHosts

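OpenSSH can hash hostnames in known_hosts for privacy. The upstream default is HashKnownHosts no, but some distributions (Debian and Ubuntu, for example) enable it in /etc/ssh/ssh_config: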

# Hashed entry (can't see which host it's for)

|1|abc123...|def456...= ssh-ed25519 AAAAC3...

# Unhashed entry (hostname visible)

server.example.com ssh-ed25519 AAAAC3...

# ~/.ssh/config - control hashing

Host *

    # Upstream default is no; Debian and Ubuntu ship with yes

    HashKnownHosts yes

Distribute known_hosts via Configuration Management

Ansible:

# Collect host keys and distribute to all clients

- name: Gather SSH host keys

  shell: ssh-keyscan -t ed25519 {{ item }}

  loop: "{{ groups['all'] }}"

  register: host_keys

- name: Distribute known_hosts

  template:

    src: known_hosts.j2

    dest: /etc/ssh/ssh_known_hosts

    mode: '0644'

System-wide known_hosts:

# /etc/ssh/ssh_known_hosts - applies to all users on the machine

# Managed centrally; only root can edit it

# Scan and add all your servers (tee runs as root; a plain >> redirect would not)

ssh-keyscan -t ed25519 server1.example.com server2.example.com | sudo tee -a /etc/ssh/ssh_known_hosts

SSH Certificate Authority (Best Solution for Scale)

Instead of managing known_hosts entries for every server, use an SSH CA to sign host keys:

# 1. Create a host CA key (do this once)

ssh-keygen -t ed25519 -f host_ca -C "Host CA"

# 2. Sign each server's host key

ssh-keygen -s host_ca -I "server1.example.com" -h -n "server1.example.com,10.0.1.50" /etc/ssh/ssh_host_ed25519_key.pub

# 3. Configure servers to present the signed key

# /etc/ssh/sshd_config:

HostCertificate /etc/ssh/ssh_host_ed25519_key-cert.pub

# 4. Configure clients to trust the CA

# ~/.ssh/known_hosts or /etc/ssh/ssh_known_hosts:

@cert-authority *.example.com ssh-ed25519 AAAAC3... (host CA public key)

With this setup, clients trust any server whose host key is signed by your CA. No more known_hosts management for individual servers.
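The `@cert-authority` line in step 4 can be built from the CA public key file instead of being pasted by hand. As a minimal sketch, the hypothetical helper below reads a `.pub` file such as the `host_ca.pub` created in step 1 and prints the matching known_hosts line. It assumes the usual one-line public key format of key type, base64 key, and optional comment.

```shell
# cert_authority_line PATTERN CA_PUB_FILE
# Print a known_hosts line that marks the key in CA_PUB_FILE as a trusted
# host CA for hosts matching PATTERN.
# Hypothetical helper for illustration; not an OpenSSH command.
cert_authority_line() {
    pattern=$1
    ca_pub=$2
    [ -r "$ca_pub" ] || { echo "cannot read $ca_pub" >&2; return 1; }
    # A .pub file is "<key-type> <base64-key> [comment]"; keep the first two fields
    awk -v pattern="$pattern" 'NF >= 2 { print "@cert-authority", pattern, $1, $2; exit }' "$ca_pub"
}
```

For example, `cert_authority_line '*.example.com' host_ca.pub | sudo tee -a /etc/ssh/ssh_known_hosts` would append the line to the system-wide file. Quote the pattern so the shell does not expand the `*`.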

Automation and CI/CD Considerations

GitHub Actions / CI pipelines

# Add known host keys in CI to avoid interactive prompts

- name: Add SSH known hosts

  run: |

    mkdir -p ~/.ssh

    ssh-keyscan -t ed25519 deploy-server.example.com >> ~/.ssh/known_hosts

    chmod 644 ~/.ssh/known_hosts

Docker containers

# Add known hosts at build time

RUN mkdir -p /root/.ssh && \
    ssh-keyscan -t ed25519 github.com >> /root/.ssh/known_hosts && \
    chmod 644 /root/.ssh/known_hosts

Ansible

# Disable host key checking for dynamic inventory (cloud environments)

# ansible.cfg:

[defaults]

host_key_checking = False

# Better: use the known_hosts module

- name: Accept host key

  known_hosts:

    name: "{{ inventory_hostname }}"

    key: "{{ lookup('pipe', 'ssh-keyscan -t ed25519 ' + inventory_hostname) }}"

    state: present

Troubleshooting Edge Cases

Multiple keys for the same host

# If a host has multiple key types, remove all of them

ssh-keygen -R hostname.com

# This removes all key types (ed25519, rsa, ecdsa) for that host

known_hosts file permissions

# known_hosts should be readable by the user

chmod 644 ~/.ssh/known_hosts

# If SSH complains about permissions

chmod 700 ~/.ssh

chmod 644 ~/.ssh/known_hosts

Host key changed on a non-standard port

# The known_hosts entry includes the port for non-standard ports

# Format: [hostname]:port

ssh-keygen -R "[hostname.com]:2222"

ProxyJump / bastion host issues

# If the error is for the bastion, not the target

ssh-keygen -R bastion.example.com

# If the error is for the target (through the bastion)

ssh-keygen -R target-server.internal

FAQ

Is it safe to just delete the old key and accept the new one?

Only if you can explain why the key changed. Legitimate reasons: server was rebuilt, OS reinstalled, host keys rotated, IP reassigned to a new machine. If none of these apply, do NOT accept the new key — investigate first. A changed host key with no explanation is exactly what a man-in-the-middle attack looks like.

How do I prevent this error when auto-scaling creates new instances?

Use one of these approaches: (1) SSH Certificate Authority: sign all host keys with a CA, clients trust the CA. (2) Pre-bake host keys into your AMI/image so all instances from the same image have the same key. (3) Use StrictHostKeyChecking accept-new for auto-scaled environments; this helps only when new instances get names or addresses that are not already in known_hosts, because changed keys are still rejected. (4) Publish host key fingerprints via DNS SSHFP records.

Why does this happen every time I recreate a Docker container or Vagrant VM?

Ephemeral environments generate new host keys on every creation. Solutions: (1) Mount persistent host keys into the container. (2) Use StrictHostKeyChecking no with UserKnownHostsFile /dev/null for development only. (3) Use a fixed set of host keys in your Dockerfile or Vagrantfile.

Can I use the same SSH host key across multiple servers?

Yes, and this is appropriate for: load-balanced servers behind the same hostname, auto-scaled instances, and disaster recovery pairs. Generate one host key and deploy it to all servers that share the same DNS name. This prevents the verification error when the load balancer routes to a different backend.

What’s the difference between host keys and user keys?

Host keys identify the server to the client (stored in known_hosts). User keys identify the client to the server (stored in authorized_keys). The “host key verification failed” error is about the server’s identity, not your authentication. Even if your user key is correct, SSH won’t let you connect if it can’t verify the server’s identity.
